Rugby World Cup explainer using data

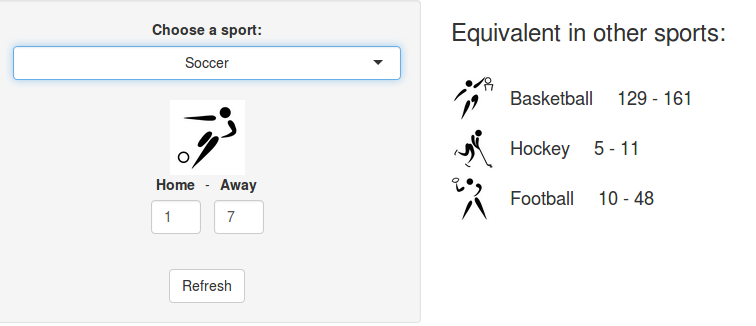

Last week, a stereotypical “French” ceremony opened the 10th Rugby World Cup at the Stade de France, in the suburbs of Paris. As a small boy growing up in the southern half of France, I developed a strong interest in the sport. Now that I am an adult living and working in North America, where barely anyone has ever heard the word “rugby”, I rarely have anyone to talk to about Antoine Dupont’s (captain of the French team and best…